AI-Generated Bullshit Is A Challenge To Our “Vigilance”

What ChatGPT has in common with magazine copy-editing

I once knew a copy-editor who read all her stories backwards.

She said it forced her to slow down, so she’d catch more mistakes.

Cool technique, eh?

Let’s put a pin in that for now.

I’ll come back to it, though, because it relates to something that’s been happening quite a bit lately:

Humans are being duped by AI-generated bullshit.

Last week, Google showed off “Bard”, an “experimental conversational AI service.” The chatbot is powered by LaMDA, a large language model, and Bard is basically Google’s attempt to catch up to OpenAI and ChatGPT. Google is clearly panicked that conversational AI will become a new interface for everything and dethrone its search engine.

To show off Bard and demonstrate how smoothly it works, Google executives posed it this question: “What new discoveries from the James Webb Telescope can I tell my nine-year-old about?”

Google proudly posted the AI’s reply on Twitter …

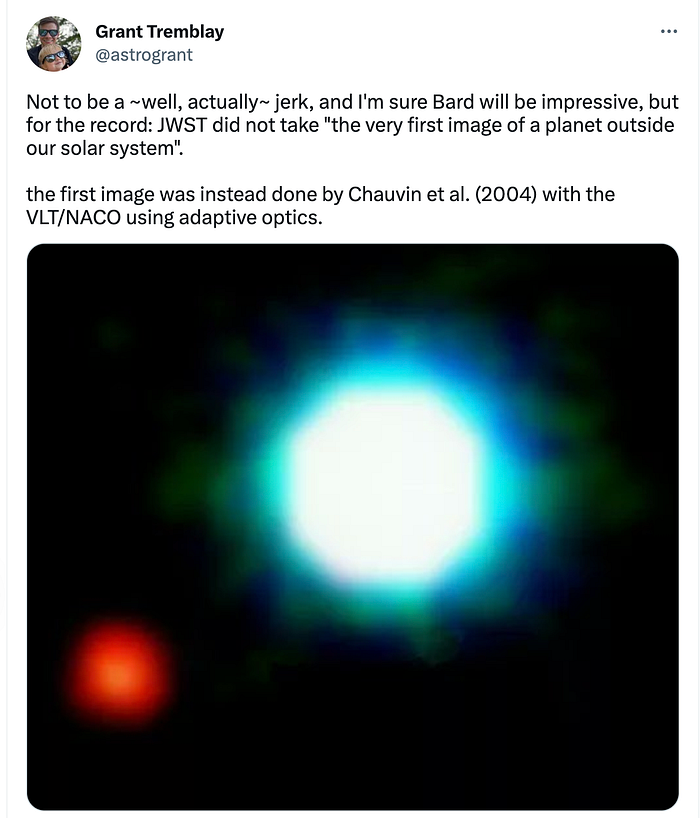

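The problem? That third fact, “JWST took the very first pictures of a planet outside of our own solar system,” is flat-out wrong. The first image of an exoplanet was taken in 2004 by a ground-based telescope, years before JWST launched.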

The astrophysicist Grant Tremblay quickly pointed this out …

A few weeks earlier, CNET fell into its own AI swamp. It turned out that CNET had published 77 stories written by an “internally-designed” AI tool, and fully 41 of them contained errors, including pieces that purported to offer personal-finance advice. Derp.

Why didn’t anyone notice these errors? I mean, we’re talking about CNET — a publication that has actual, human editors — and Google, a multi-billion-dollar firm that was proudly showing off its new AI.